Publications:

Here are my publications as indexed by Google Scholar, DBLP Bibliography, ResearchGate, ACM Portal, and IEEE Xplore. For the full publication list, please check my Curriculum Vitae. Selected research topics and the related publications are listed below.

Robotic Planning:

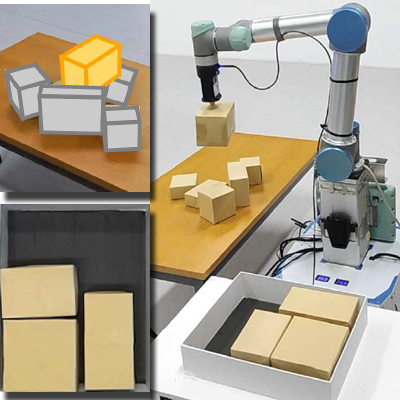

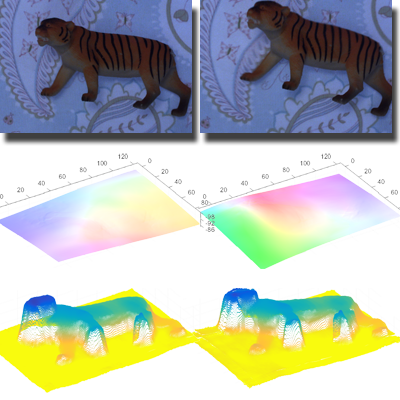

Robotic Arm Packing

We present a novel learning framework to solve the object packing problem in 3D. It forms a complete pipeline that starts from partial observations of the input objects, obtained via RGBD sensing and recognition, and ends with final box placement via robotic motion planning, arriving at a compact packing in a target container. The technical core of our method is a neural network for TAP, trained via reinforcement learning (RL), to solve this NP-hard combinatorial optimization problem.

Papers: SigAsia 2023

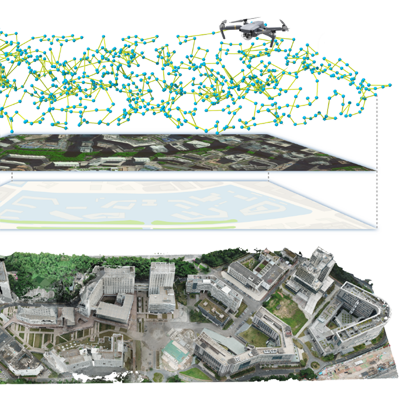

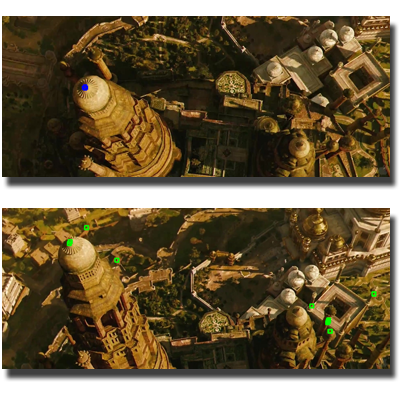

Aerial Path Planning for 3D Reconstruction

We present an adaptive aerial path planning algorithm that can be run before the site visit. Using only a 2D map and a satellite image of the area to be reconstructed, we first compute a coarse 2.5D model of the scene based on the relationship between buildings and their shadows. A novel Max-Min optimization is then proposed to select a minimal set of viewpoints that maximizes reconstructability compared with other viewpoint sets of the same size.

Papers: SigAsia 2020, SigAsia 2021

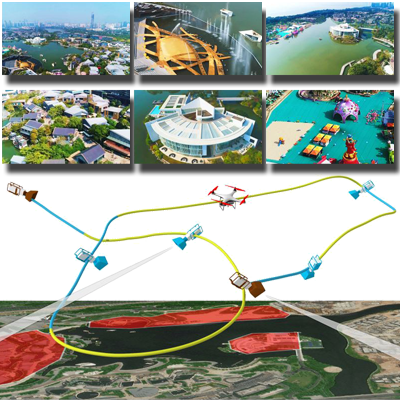

Drone Videography

We propose a higher-level tool designed to enable even novice users to easily capture compelling aerial videos of large-scale outdoor scenes. Using a coarse 2.5D model of a scene, the user is only expected to specify starting and ending viewpoints and designate a set of landmarks, with or without a particular order. Our system automatically generates a diverse set of candidate local camera moves for observing each landmark, which are collision-free, smooth, and adapted to the shape of the landmark.

Papers: CGF 2016, Siggraph 2018

Quality-driven Autoscanning

We present a quality-driven autonomous scanning method that places the scanner at strategically selected Next-Best-Views to progressively capture the geometric details of the object until both completeness and high fidelity are reached. The technique is based on the analysis of a Poisson field and its geometric relation with an input scan. We applied the algorithm on two different robotic platforms: a PR2 mobile robot and a one-arm industrial robot.

Papers: SigAsia 2014

3D Shape Modeling:

Intrusive Plant Acquisition

We present plant acquisition approaches that capture plants as disjoint parts, which can be accurately scanned and reconstructed offline. We use the reconstructed part meshes as 3D proxies for the reconstruction of the complete plant and devise a global-to-local non-rigid registration technique that preserves specific plant characteristics. Our method has been tested successfully on plants of various styles, appearances, and characteristics.

Papers: CGF 2016, CGF 2017

Flower Modeling from a Single Photo

We present a method for reconstructing flower models from a single photograph. A flower head typically consists of petals embedded in 3D space that share similar shapes and form a certain level of regular structure. Our technique exploits these assumptions and allows users to quickly generate a variety of realistic 3D flowers from photographs, and to animate an image using the models reconstructed by our method.

Papers: EG 2014

Reconstruction from Incomplete Point Cloud

With significant data missing in a point scan, reconstructing a complete surface with geometric and topological fidelity fully automatically is highly challenging. Directly editing data in 3D is tedious, but working with 1D entities is a lot more manageable. Our interactive reconstruction iterates between user edits and morfit to converge to a desired final surface. We demonstrate various interactive reconstructions from point scans with sharp features and significant missing data.

Papers: SigAsia 2014

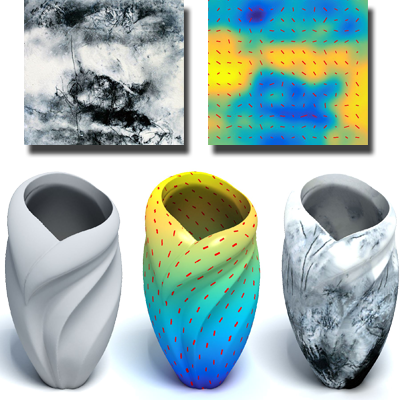

Field-Guided Registration for Shape Composition

We present an automatic shape composition method to fuse two shape parts which may not overlap and possibly contain sharp features. At the core of our method is a novel field-guided approach to automatically align two input parts in a feature-conforming manner. The key to our field-guided shape registration is a natural continuation of one part into the ambient field as a means to introduce an overlap with the distant part, which then allows a surface-to-field registration.

Papers: SigAsia 2012

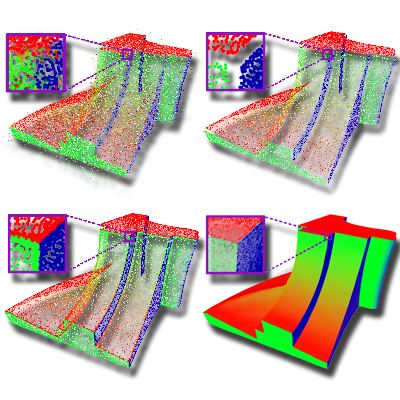

Edge-Aware Point Set Resampling

We propose a resampling approach to process a noisy and possibly outlier-ridden point set in an edge-aware manner. The key idea is to first resample away from the edges so that reliable normals can be computed, and then progressively resample the point set while approaching the edge singularities. Results show that the algorithm is capable of producing consolidated point sets with noise-free normals and clean preservation of sharp features.

Papers: TOG 2013

3D Shape Analysis:

Deep Points Consolidation

This work presents a consolidation method that is based on a new representation of 3D point sets. The key idea is to augment each surface point into a deep point by associating it with an inner point that resides on the meso-skeleton, which consists of a mixture of skeletal curves and sheets. We demonstrate the advantages of the deep points consolidation technique by employing it to consolidate and complete noisy point-sampled geometry with large missing parts.

Papers: SigAsia 2015

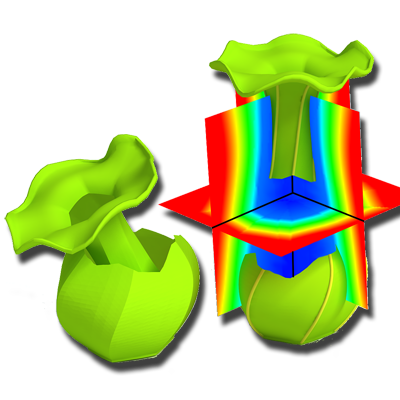

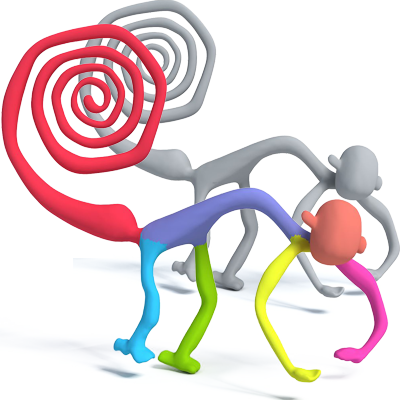

Generalized Cylinder Decomposition

Decomposing a complex shape into geometrically simple primitives is a fundamental problem in geometry processing. We introduce a quantitative measure of cylindricity for a shape part and develop a cylindricity-driven optimization algorithm, with a global objective function, for generalized cylinder decomposition. We demonstrate results of our optimal decomposition algorithm on numerous examples and compare with other alternatives.

Papers: SigAsia 2015

Mobility-Trees for Indoor Scenes Manipulation

This work introduces the mobility-tree construct for high-level functional representation of complex 3D indoor scenes. We analyze the recurrence of objects in the scene and automatically detect their functional mobilities based on their spatial arrangements, repetitions, and relations with other objects. Repetitive motions in the scenes are grouped into mobility-groups, for which we develop a set of controllers facilitating semantic high-level editing operations.

Papers: CGF 2013

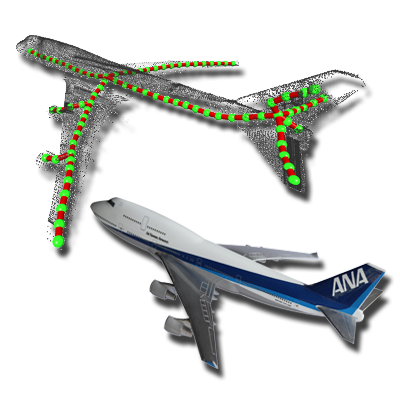

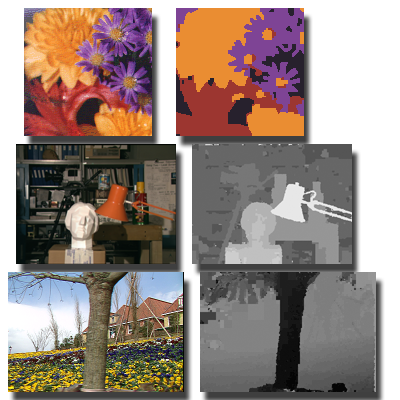

Projective Analysis for 3D Shape Segmentation

We introduce projective analysis for semantic segmentation and labeling of 3D shapes. An input 3D shape is treated as a collection of 2D projections, which are labeled through supervised learning using selected training images. Projective analysis simplifies the processing task by working in a lower-dimensional space. We demonstrate semantic labeling of imperfect 3D models which are otherwise difficult to analyze without utilizing 2D training data.

Papers: SigAsia 2013

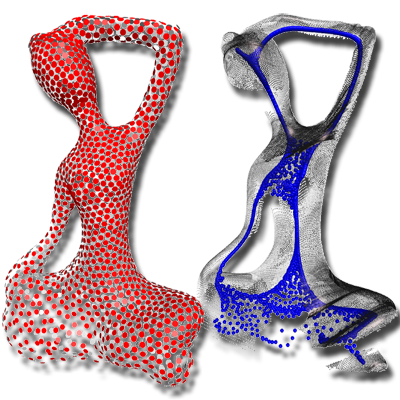

L1-Medial Skeleton of Point Cloud

The L1-median is well known as a robust global center of an arbitrary set of points. We develop an L1-medial skeleton construction algorithm, which can be directly applied to an unoriented raw point scan with significant noise, outliers, and large areas of missing data. We demonstrate L1-medial skeletons extracted from raw scans of a variety of shapes, including those modeling high-genus 3D objects, plants, and curve networks.

Papers: Siggraph 2013
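
The L1-median mentioned above is the geometric median: the point that minimizes the sum of Euclidean distances to all samples. The following is a minimal sketch of how it can be computed with Weiszfeld's classic iteratively reweighted averaging. It assumes 2D points given as tuples. The function name and tolerances are illustrative. The sketch computes only this global center, not the L1-medial skeleton construction itself.

```python
import math

def l1_median(points, iters=200, eps=1e-9):
    """Approximate the L1-median (geometric median) of 2D points
    using Weiszfeld's fixed-point iteration."""
    # Start from the centroid, a common initial guess.
    x = sum(p[0] for p in points) / len(points)
    y = sum(p[1] for p in points) / len(points)
    for _ in range(iters):
        num_x = num_y = denom = 0.0
        for px, py in points:
            d = math.hypot(px - x, py - y)
            if d < eps:
                # Simplification: if the estimate lands on a sample, return it.
                return (float(px), float(py))
            w = 1.0 / d  # inverse-distance weight
            num_x += w * px
            num_y += w * py
            denom += w
        nx, ny = num_x / denom, num_y / denom
        if math.hypot(nx - x, ny - y) < eps:  # converged
            return (nx, ny)
        x, y = nx, ny
    return (x, y)
```

Consider the four corners of a unit square plus the outlier (100, 100). The outlier drags the centroid to (20.4, 20.4), while the L1-median stays inside the square at about (0.789, 0.789). This is the robustness property the paragraph refers to.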

Image and Video Synthesis:

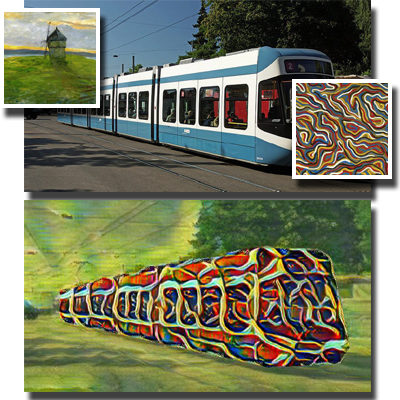

Neural Networks for Arbitrary Style Transfer

Existing state-of-the-art approaches often try to generate the stylized result in a single shot and hence fail to fully satisfy the constraints on semantic structures in the content images and style patterns in the style images. We propose a self-correcting model that predicts what is wrong with the current stylized result and refines it iteratively. For each refinement, we pass the error features across both the spatial and scale domains and invert the processed features into a residual image, using a network we call the Error Transition Network (ETNet).

Papers: NeurIPS 2019, AAAI 2020

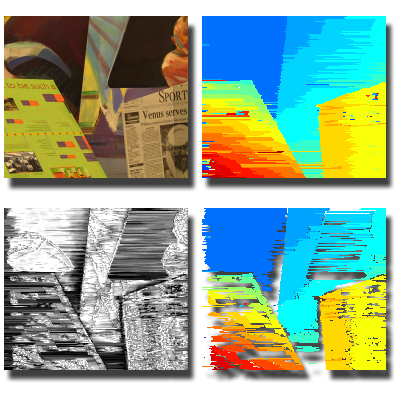

Dual Learning for Image-to-Image Translation

We develop a novel dual-GAN mechanism, which enables image translators to be trained from two sets of unlabeled images from two domains. In our architecture, the primal GAN learns to translate images from domain U to those in domain V, while the dual GAN learns to invert the task. The closed loop made by the primal and dual tasks allows images from either domain to be translated and then reconstructed. Experiments on multiple image translation tasks show considerable performance gains over a single GAN.

Papers: ICCV 2017
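
The closed loop can be summarized as a reconstruction objective. The following is a hedged sketch in our own notation, not necessarily the paper's. Let G_A: U → V be the primal translator and G_B: V → U the dual translator. Assuming an L1 distance and weights λ_U and λ_V, the reconstruction term below penalizes a translated image that fails to return to its starting point. In training, this term would be combined with the adversarial terms from the discriminators of the two domains.

```latex
\mathcal{L}_{\text{rec}}(u, v)
  = \lambda_U \,\bigl\lVert u - G_B\bigl(G_A(u)\bigr) \bigr\rVert_1
  + \lambda_V \,\bigl\lVert v - G_A\bigl(G_B(v)\bigr) \bigr\rVert_1,
\qquad u \in U,\; v \in V
```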

Controlled Synthesis of Inhomogeneous Textures

We propose a new method for automatic analysis and controlled synthesis of inhomogeneous textures. Given an input texture exemplar, our method generates a source guidance map comprising: (i) a scalar progression channel that attempts to capture the low frequency spatial changes in color, lighting, and local pattern combined, and (ii) a direction field that captures the local dominant orientation of the texture. Users can then exercise better control over the synthesized result by providing easily specified target guidance maps.

Papers: EG 2017

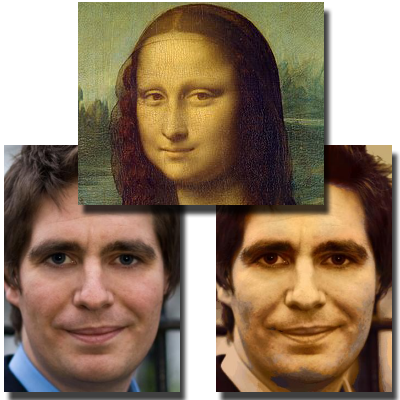

Face Photo Stylization

We present a unified framework for fully automatic face photo stylization based on a single style exemplar. Constrained by the "single-exemplar" condition, where the number and variety of patch samples are limited, we introduce flexibility in sample selection while preserving the identity and content of the input photo. Experimental results demonstrate compelling visual effects and notable improvements over other state-of-the-art methods adapted for the same task.

Papers: TVCJ 2017

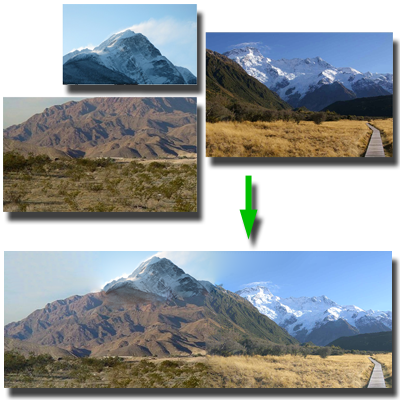

Structure-Driven Image Completion

We propose a method that starts with a set of sloppily pasted image pieces with gaps between them, extracts salient curves that approach the gaps, and uses corresponding curve pairs to guide a novel tele-registration method that simultaneously aligns all the pieces together. A structure-driven image completion technique is then proposed to fill the gaps, after which standard inpainting tools can be employed to finish the job.

Papers: SigAsia 2013

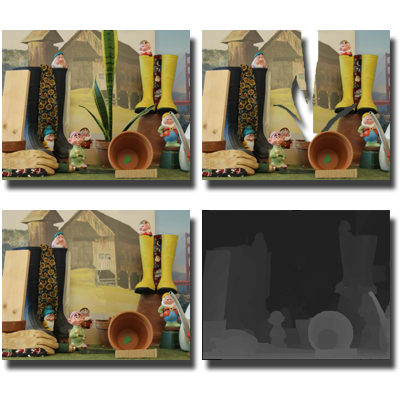

Video Stereolization

We present a semiautomatic system that converts conventional videos into stereoscopic videos by combining motion analysis with user interaction. Besides structure-from-motion techniques, we develop two new methods that analyze the optical flow to provide additional qualitative depth constraints. We further develop a quadratic programming approach that incorporates both quantitative and qualitative depth to recover dense depth maps for all frames.

Papers: TVCG 2012

Stereoscopic Inpainting

We present a novel algorithm for simultaneous color and depth inpainting. The algorithm takes stereo images and estimated disparity maps as input and fills in missing color and depth information introduced by occlusions or object removal. We demonstrate the effectiveness of the proposed algorithm on several challenging data sets.

Papers: CVPR 2008

Layer-based Morphing

The warp functions used in most image morphing algorithms are one-to-one. Hence, when some features are visible in only one of the reference images, "ghosting" problems appear. In addition, since the control features have global effects, there is no way to control different objects in the scene separately. To address these problems, a layer-based morphing approach is proposed, in which multiple layers are created and used to solve the visibility problem.

Papers: GM 2001

Image Analysis:

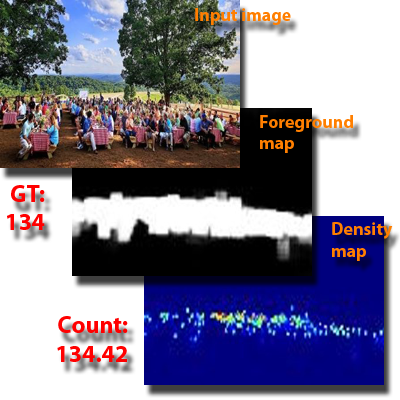

Learning-based Crowd Counting

Novel network architectures have been devised to accurately count the number of people in crowded scenes. These architectures address the longstanding challenge of accuracy degradation caused by significant scale variations, density disparities, and complex backgrounds. Furthermore, the latest approach can be trained effectively using weak supervisory signals, eliminating the need for precise location-level annotations.

Papers: TMM 2023, PR 2023

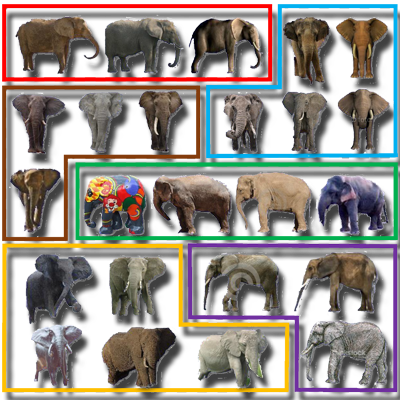

Distilled Collections from Textual Image Queries

We present a distillation algorithm which operates on a large, unstructured, and noisy collection of internet images returned from an online object query. We introduce the notion of a distilled set, which is a clean, coherent, and structured subset of inlier images. In addition, the object of interest is properly segmented out throughout the distilled set. Our approach is unsupervised, built on a novel clustering scheme, and solves the distillation and object segmentation problems simultaneously.

Papers: EG 2015

Hierarchical Color Image Segmentation

Existing fuzzy c-partition entropy approaches generally have two limitations: the partition number needs to be tuned manually for each input, and the methods can process only grayscale images. To address these two limitations, an unsupervised multilevel segmentation algorithm is presented. Experimental results demonstrate that the presented hierarchical segmentation scheme can efficiently segment both grayscale and color images.

Papers: PR 2014, PR 2017

Refractive Imaging:

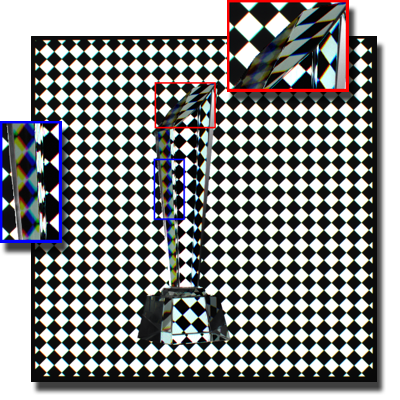

Transparent Object Modeling

None of the existing 3D reconstruction techniques can be directly applied to transparent objects. We present a fully automatic approach for reconstructing complete 3D shapes of transparent objects under three constraints: surface and refraction normal consistency, surface projection and silhouette consistency, and surface smoothness. Experimental results demonstrate that our method can successfully recover the shapes of transparent objects and reproduce their light refraction properties.

Papers: Siggraph 2018

Water Surface Reconstruction

We present the first approach for simultaneously recovering the 3D shape of both the wavy water surface and the moving underwater scene. A portable camera array system is constructed, which captures the scene from multiple viewpoints above the water. The correspondences across these cameras are estimated using an optical flow method and are used to infer the shape of the water surface and the underwater scene.

Papers: CVPR 2017, ECCV 2018

3D Reconstruction of Transparent Objects

Estimating the shape of transparent and refractive objects is one of the few open problems in 3D reconstruction. Under the assumption that the rays refract only twice when traveling through the object, we present the first approach for simultaneously reconstructing the 3D positions and normals of the object's surface at both refraction locations. Experimental results using both synthetic and real data demonstrate the robustness and accuracy of the proposed approach.

Papers: CVPR 2016

Frequency-based Environment Matting

We propose a novel approach to capturing and extracting the matte of a real scene effectively and efficiently. By exploiting the recently developed compressive sensing theory, we simplify the data acquisition process of frequency-based environment matting. Compared with the state-of-the-art method, our approach achieves superior performance on both synthetic and real data, while consuming only a fraction of the processing time.

Papers: ICCV 2015

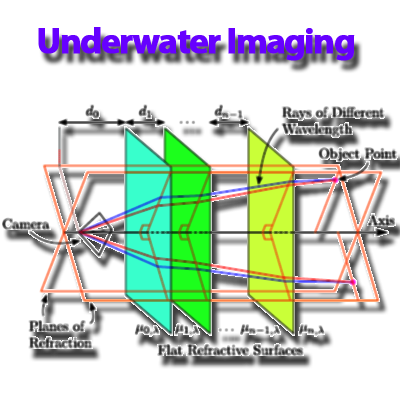

Underwater Stereo and Imaging

In underwater imagery, refractions occur when light passes from water into the camera housing. We extend the existing work on physical refraction models by considering the dispersion of light, and derive new constraints on the model parameters for use in calibration. This leads to a novel calibration method that achieves improved accuracy compared to existing work.

Papers: CVPR 2013
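
Refraction at a flat interface follows Snell's law. With a wavelength-dependent index of refraction it also exhibits dispersion. The following is a minimal vector-form sketch for a single interface; it ignores the thickness of the housing glass. It assumes unit-length direction vectors and a normal pointing against the incoming ray. The function name and the index values in the usage lines are illustrative assumptions, not the calibrated parameters or the calibration method from the paper.

```python
import math

def refract(d, n, eta):
    """Refract unit direction d at a surface with unit normal n (Snell's law).

    eta = n1 / n2 (incident medium index over transmitted medium index).
    n is assumed to point against d, i.e. dot(d, n) < 0.
    Returns the unit transmitted direction, or None on total internal reflection.
    """
    cos_i = -sum(a * b for a, b in zip(d, n))
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None  # total internal reflection
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * a + (eta * cos_i - cos_t) * b for a, b in zip(d, n))

# Illustrative dispersion: approximate indices of water for blue and red light,
# refracting into air (index 1.0) at 30 degrees incidence.
d = (0.5, -math.sqrt(3) / 2, 0.0)
n = (0.0, 1.0, 0.0)
for name, n_water in (("blue", 1.337), ("red", 1.331)):
    print(name, refract(d, n, n_water / 1.0))
```

Because the transmitted direction depends on `eta`, the two wavelengths leave the interface at slightly different angles. This per-wavelength difference is the dispersion effect the paragraph refers to.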

3D Human Digitalization:

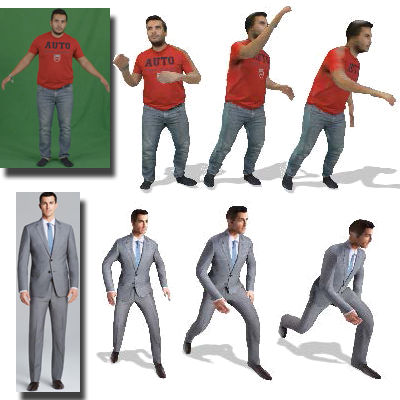

Avatar Modeling From Sparse RGBD Images

We propose a novel approach to reconstruct 3D human body shapes based on a sparse set of RGBD frames using a single RGBD camera. We specifically focus on the realistic settings where human subjects move freely during the capture, and address the challenge of how to robustly fuse these sparse frames into a canonical 3D model, under pose changes and surface occlusions.

Papers: TMM 2021, ECCV 2022

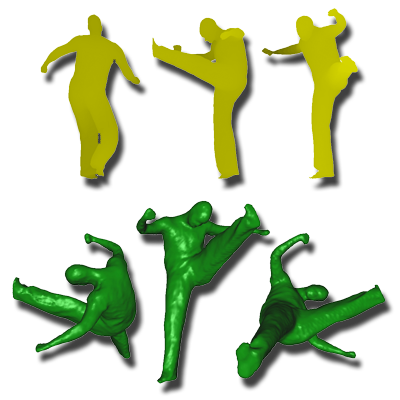

Conditioned Generation of 3D Human Motions

Given a prescribed action type, we aim to generate plausible human motion sequences in 3D. Importantly, the set of generated motions is expected to remain diverse enough to explore the entire action-conditioned motion space, while each sampled sequence faithfully resembles natural human body articulation dynamics. We also propose a temporal Variational Auto-Encoder (VAE) that encourages diverse sampling of the motion space.

Papers: MM 2020, IJCV 2022

Modeling of Deformable Human Shapes

We propose a novel approach to reconstruct complete 3D deformable models over time using a single depth camera. The core of this algorithm is based on the assumption that the deformation is continuous and predictable in a short temporal interval. Our experiments show that this approach is able to reconstruct visually plausible 3D surface deformation results with a single camera.

Papers: ICCV 2009

Foreground Segmentation and Matting:

Real-time Video Matting

This project presents the first real-time matting algorithm that can handle live videos with natural backgrounds. The algorithm is based on a set of novel Poisson equations derived for handling multichannel color vectors as well as captured depth information. Quantitative evaluation shows that the presented algorithm is comparable to state-of-the-art offline image matting techniques in terms of accuracy.

Papers: GI 2010, IJCV 2012
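
For background, the classic single-channel Poisson matting formulation is shown below. Differentiating the compositing equation and assuming that the foreground F and background B vary slowly (their gradients are neglected) gives an approximate matte gradient. The matte α is then obtained by solving a Poisson equation over the unknown region Ω, with boundary values α̂ taken from the known regions. This is the general starting point for Poisson-based matting, not the multichannel, depth-aware equations of this project.

```latex
I = \alpha F + (1 - \alpha) B
\;\Rightarrow\;
\nabla \alpha \approx \frac{\nabla I}{F - B}
\;\Rightarrow\;
\Delta \alpha = \operatorname{div}\!\left( \frac{\nabla I}{F - B} \right) \text{ in } \Omega,
\qquad
\alpha\big|_{\partial \Omega} = \hat{\alpha}\big|_{\partial \Omega}
```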


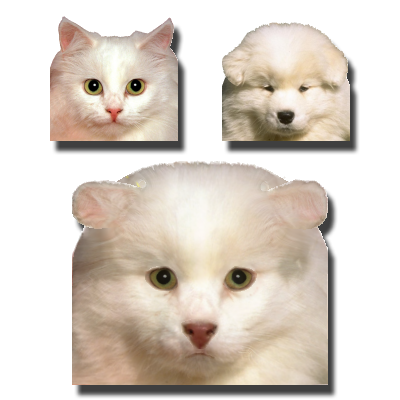

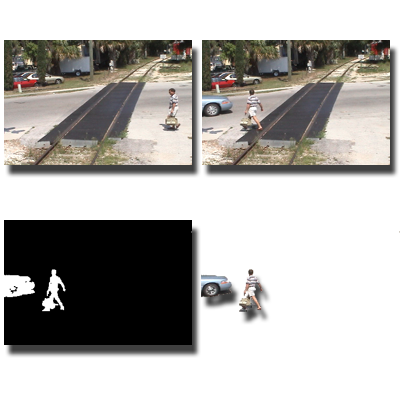

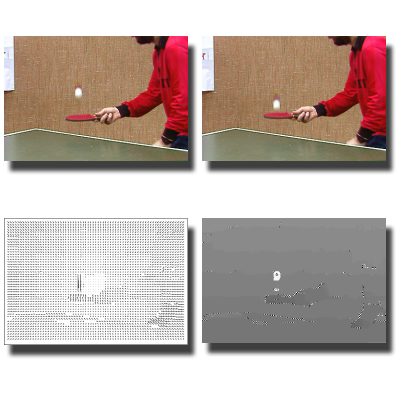

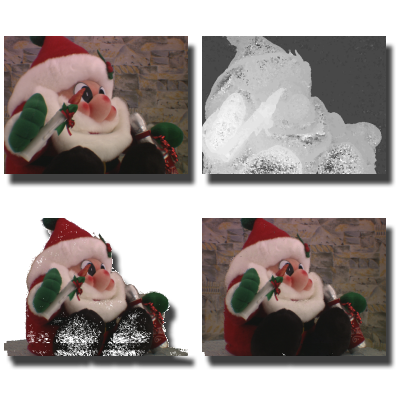

Foreground Segmentation for Live Videos

An easy-to-implement and simple-to-use algorithm is proposed for processing live video in real time. The algorithm can handle a variety of challenges such as dynamic background, fuzzy object boundaries, camera motion, topology changes, and low color contrast. It has applications in video matting, video conferencing, and automatic background subtraction.

Papers: CVPR 2011, TIP 2015

Background Subtraction from Dynamic Scenes

This project addresses the problem of foreground separation from the background modeling perspective. In particular, we deal with the difficult scenarios where the background texture might change spatially and temporally. Empirical experiments on a variety of datasets show the competitiveness of the proposed approach, which works in real-time on programmable graphics cards.

Papers: ICCV 2009, TIP 2011

Source code: ZIP

Stereo Vision:

Subpixel Stereo with Slanted Surface Modeling

This project proposes a new stereo matching algorithm that takes surface orientation into consideration at the per-pixel level. Two disparity calculation passes are used. The first uses the fronto-parallel surface assumption to generate an initial disparity map, from which the disparity plane orientations of all pixels are estimated. The second pass aggregates the matching costs for different pixels along the estimated disparity plane orientations using adaptive support weights.

Papers: PR 2011
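
The second pass can be sketched as follows. This is an illustration under stated assumptions, not the paper's implementation:

- The support weights follow the well-known adaptive-support-weight form of Yoon and Kweon, computed here from the reference image only.
- The disparity plane is described by a hypothetical gradient `slope = (a, b)`.
- `cost` is a caller-supplied function that can be evaluated at subpixel disparities.
- The `gamma_c`/`gamma_p` defaults are illustrative.

```python
import math

def support_weight(color_p, color_q, dist, gamma_c=7.0, gamma_p=36.0):
    """Adaptive support weight: w = exp(-(color difference / gamma_c
    + spatial distance / gamma_p)). Gamma defaults are illustrative."""
    dc = math.dist(color_p, color_q)
    return math.exp(-(dc / gamma_c + dist / gamma_p))

def aggregate_slanted(cost, image, x, y, d, slope=(0.0, 0.0), radius=2):
    """Weighted cost aggregation around pixel (x, y) along a disparity plane.

    cost(qx, qy, disp) -> matching cost of pixel (qx, qy) at (subpixel) disparity disp
    image[y][x]        -> color tuple of the reference image
    slope = (a, b)     -> disparity gradient of the plane; (0, 0) is fronto-parallel
    """
    a, b = slope
    h, w = len(image), len(image[0])
    num = den = 0.0
    for qy in range(max(0, y - radius), min(h, y + radius + 1)):
        for qx in range(max(0, x - radius), min(w, x + radius + 1)):
            wt = support_weight(image[y][x], image[qy][qx], math.hypot(qx - x, qy - y))
            disp = d + a * (qx - x) + b * (qy - y)  # plane disparity at neighbor q
            num += wt * cost(qx, qy, disp)
            den += wt
    return num / den
```

Setting `slope=(0.0, 0.0)` reduces this to the fronto-parallel aggregation of the first pass. A non-zero slope evaluates each neighbor at the disparity predicted by the estimated plane. This lets slanted surfaces reach low aggregated costs.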


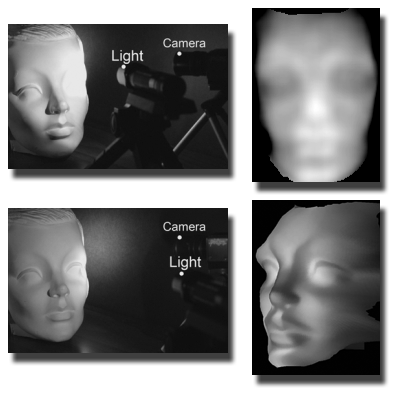

Light Fall-off Stereo

We present light fall-off stereo (LFS), a new method for computing depth from scenes beyond Lambertian reflectance and texture. LFS takes a number of images from a stationary camera as the illumination source moves away from the scene. Based on the inverse square law for light intensity, the ratio images are directly related to scene depth from the perspective of the light source.

Papers: CVPR 2007
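
The inverse-square relationship can be illustrated with just two images. The sketch makes two simplifying assumptions. First, the light moves a known distance `delta` directly away from a scene point. Second, albedo and shading are identical in both images, so they cancel in the ratio. Under these assumptions, `I_near = k / r**2` and `I_far = k / (r + delta)**2`. Hence `sqrt(I_near / I_far) = (r + delta) / r` and `r = delta / (sqrt(I_near / I_far) - 1)`. The function below is a hypothetical per-pixel sketch of that algebra, not the LFS implementation.

```python
import math

def depth_from_falloff(i_near, i_far, delta):
    """Distance r from the near light position to a scene point.

    Assumes I_near = k / r**2 and I_far = k / (r + delta)**2 with the same
    unknown k (albedo and shading), i.e. the light moved delta straight away.
    """
    ratio = math.sqrt(i_near / i_far)  # equals (r + delta) / r, so > 1
    if ratio <= 1.0:
        raise ValueError("expected the near-light image to be brighter")
    return delta / (ratio - 1.0)
```

Applied per pixel to a pair of images, this turns the ratio image into a depth map, up to the stated assumptions.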


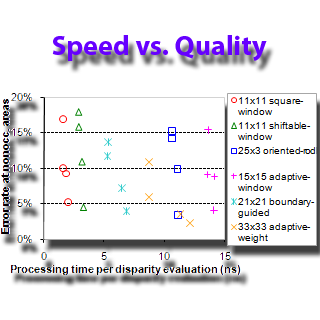

Evaluation of Cost Aggregation Approaches

This project studies how well different cost aggregation approaches perform under the real-time constraint. Six recent cost aggregation approaches are implemented and optimized for graphics hardware so that real-time speed can be achieved. The performance of these aggregation approaches, in terms of both processing speed and result quality, is reported.

Papers: IJCV 2007

Source code: ZIP
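
As a point of reference for what cost aggregation does, here is a minimal sketch of the simplest baseline. It averages absolute-difference matching costs over a square window, then selects disparities winner-take-all. It assumes rectified grayscale images stored as lists of rows. It is written for clarity in plain Python, not for the GPU. It is not one of the six approaches evaluated in the paper, and all names are illustrative.

```python
def box_aggregate_wta(left, right, max_disp, radius=1):
    """Square-window (box) cost aggregation with winner-take-all disparity
    selection. left[y][x] is matched against right[y][x - d]."""
    h, w = len(left), len(left[0])
    disparity = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            best_cost, best_d = float("inf"), 0
            for d in range(min(max_disp, x) + 1):  # keep x - d inside the image
                total, count = 0.0, 0
                for qy in range(max(0, y - radius), min(h, y + radius + 1)):
                    # Start at column d so that qx - d never leaves the right image.
                    for qx in range(max(d, x - radius), min(w, x + radius + 1)):
                        total += abs(left[qy][qx] - right[qy][qx - d])  # absolute difference
                        count += 1
                cost = total / count  # mean matching cost over the window
                if cost < best_cost:  # winner-take-all: keep the cheapest disparity
                    best_cost, best_d = cost, d
            disparity[y][x] = best_d
    return disparity
```

The more elaborate approaches replace the uniform window average with weighted or adaptive windows. The winner-take-all step stays the same.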


Real-time Stereo Matching on GPUs

Real-time stereo estimation has many important applications in robotics, image-based modeling and rendering, etc. This project investigates how to perform stereo matching on the Graphics Processing Units (GPU) of modern programmable graphics cards. The proposed algorithm is capable of estimating disparity maps for dynamic real scenes in real time.

Papers: CVPR 2005, TIP 2007

Unambiguous Matching w/ Reliability-based DP

A new reliability measure is designed for evaluating stereo matches. It is defined as the cost difference between the globally best disparity assignment that includes the match and the globally best assignment that does not include the match. A reliability-based algorithm is derived, which selectively assigns disparities to pixels only when the corresponding reliabilities exceed a given threshold.

Papers: ICCV 2003, TPAMI 2005
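
The reliability measure can be made concrete on a single scanline. The sketch below assumes a simplified chain model: a per-pixel cost table plus a linear smoothness penalty `lam * |d - d'|`, with no ordering or occlusion handling. It therefore illustrates the definition rather than reproducing the published algorithm, and the function and parameter names are hypothetical. Forward and backward dynamic programming passes give, for every match (x, d), the cost of the best full assignment through it. Every assignment picks exactly one disparity at x. So the best assignment that excludes the match is the best one through any other disparity at x.

```python
def reliable_disparities(cost, lam, threshold):
    """Reliability-thresholded disparities for one scanline (simplified sketch).

    cost[x][d]: matching cost of pixel x at disparity d (needs >= 2 disparities).
    Returns the best disparity per pixel, or None where it is not reliable.
    """
    n, m = len(cost), len(cost[0])

    def accumulate(order):
        # Dynamic programming along the scanline in the given pixel order.
        acc, prev = [None] * n, None
        for x in order:
            if prev is None:
                acc[x] = list(cost[x])
            else:
                acc[x] = [cost[x][d] + min(acc[prev][e] + lam * abs(d - e) for e in range(m))
                          for d in range(m)]
            prev = x
        return acc

    fwd = accumulate(range(n))
    bwd = accumulate(range(n - 1, -1, -1))
    result = []
    for x in range(n):
        # Best total cost of any assignment that matches x at d
        # (cost[x][d] is counted in both passes, so subtract it once).
        through = [fwd[x][d] + bwd[x][d] - cost[x][d] for d in range(m)]
        best = min(range(m), key=through.__getitem__)
        # Best assignment that does NOT include the match (x, best).
        runner_up = min(through[d] for d in range(m) if d != best)
        reliability = runner_up - through[best]
        result.append(best if reliability > threshold else None)
    return result
```

Raising `threshold` leaves more pixels unassigned (`None`) but keeps only the least ambiguous matches. This is the trade-off the reliability-based algorithm exploits.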

Motion Estimation:

Joint Disparity and Disparity Flow Estimation

A disparity flow map for a given view depicts the 3D motion observed from this view. An algorithm is developed to compute both disparity and disparity flow in an integrated process. The estimated disparity flow is used to predict the disparity for the next frame. The temporal consistency between the two frames is therefore enforced. All computations are performed in the 2D image space, leading to an efficient implementation.

Papers: ECCV 2006, CVIU 2008

Large Motion Estimation Using Reliability-DP

Detecting and estimating motions of fast-moving objects has many important applications. Here we use the reliability-based dynamic programming (DP) technique to solve the large motion estimation problem. The resulting algorithm can handle sequences that contain large motions and can produce optical flows with 100% density over the entire image domain.

Papers: IJCV 2006

Information Visualization:

Organize Data into Structured Layout

Given a set of data items and a similarity measure between each pair of them, this project studies how to position these items in a structured layout so that their proximity reflects their similarity. Different techniques are proposed to solve this problem. Results show that these techniques can help users understand the relations among the data items and locate the desired ones while browsing.

Papers: GI 2011, TMM 2014

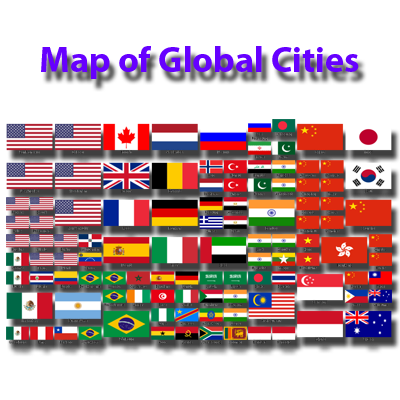

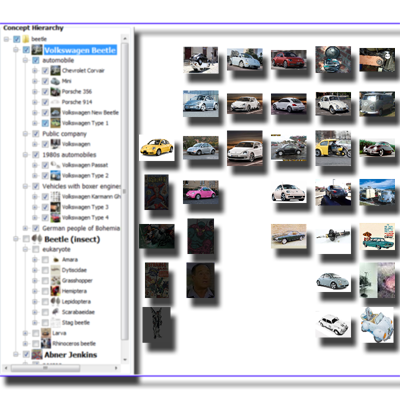

Concept-based Web Image Search

When users search images online, their queries are often short and potentially ambiguous. The solution we propose is to perform automatic query expansion using Wikipedia as the source knowledge base, resulting in a diversification of the search results. The outcome is a broad range of images that represent the various possible interpretations of the query, which are then organized using both conceptual and visual information to assist users in finding the desired images.

Papers: JETWI 2012, IP&M 2013, JAIHC 2013

Similarity-based Image Browsing

We study how to help users locate the desired photos. Using extracted feature vectors, our approach maps photos onto a 2D virtual canvas so that similar ones are close to each other. When a user browses the collection, a subset of photos is automatically selected to compose a photo collage. Once photos of interest have been identified, the user can find more photos with similar features through panning and zooming, which dynamically updates the photo collage.

Papers: CIVR 2009, IVC 2011

Rendering:

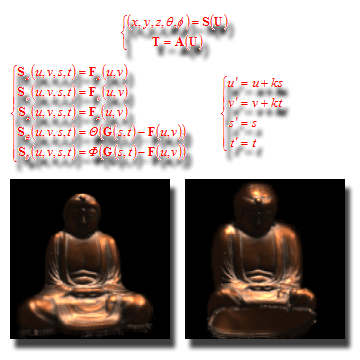

Rayset: A Taxonomy for Image-Based Rendering

A rayset is a parametric function that consists of two mapping relations: the first maps from a parameter space to the ray space, and the second maps from the parameter space to the attribute space. Based on the rayset concept, a taxonomy is proposed under which the scene representations and the scene reconstruction techniques used in image-based rendering are individually classified.

Papers: GI 2001, IJIG 2006

Camera Field Rendering for Dynamic Scenes

This project studies how to render dynamic scenes using only a few sample views and estimated noisy disparity maps. To color a pixel in the novel view, a backward searching process is conducted to find the corresponding pixels in the four closest reference images. Since the computations for different pixels are independent, they can be performed in parallel on the Graphics Processing Units of modern graphics hardware.

Papers: GM 2005, GI 2007

Fast Ray-NURBS Intersection Calculation

A new algorithm with an extrapolation process for computing ray-NURBS intersections is presented. In the preprocessing step, the NURBS surfaces are subdivided adaptively into rational Bezier patches, and parallelepipeds are used to enclose the respective patches as tightly as possible. The improved Newton iteration with the extrapolation process saves CPU time by reducing the number of iteration steps. The intersection scheme is faster than previous methods.

Papers: C&G 1997

Evolutionary Computation:

Artificial Multi-Bee-Colony Algorithm

A novel artificial multi-bee-colony (AMBC) algorithm is proposed for solving the k-nearest-neighbor fields (k-NNF) problem. A dedicated bee colony is used to search for the k-nearest matches for each pixel. Bee colonies at neighboring pixels communicate with each other, allowing good matches to propagate across the image quickly. Results show that AMBC can find better solutions than the generalized PatchMatch algorithm.

Papers: GECCO 2016

Multiresolution GA and Its Applications

A new algorithm is proposed that incorporates a multi-resolution scheme into conventional genetic algorithms (GA). Solutions to image-related problems are encoded through their corresponding quadtrees, so the 2D locality within the solutions is preserved. Several computer vision problems, such as color image segmentation, stereo matching, and motion estimation, can be solved efficiently under the same framework.

Papers: IJCV 2002, PR 2004

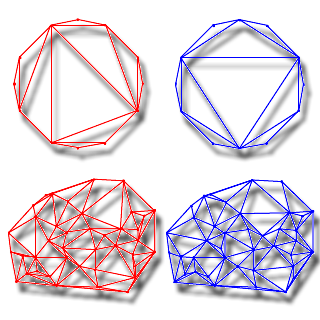

A GA for Minimum Weight Triangulation

In this project, a new method for the minimum weight triangulation of points on a plane is presented, based on the rationale of genetic algorithms (GA). A polygon crossover and its algorithm for triangulations are proposed, and new adaptive crossover and mutation operators are introduced. It is shown that the new method can obtain triangulations with lower total weight than the greedy algorithm.

Papers: ICEC 1997
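
A genetic algorithm for this problem needs a fitness function to minimize. The weight of a triangulation is its total edge length, so a natural choice is sketched below. It assumes points are (x, y) tuples and a triangulation is a list of vertex-index triples. This representation and the names are hypothetical, not the encoding used in the paper. Edges shared by two triangles are counted once.

```python
import math

def triangulation_weight(points, triangles):
    """Total edge length of a triangulation, counting each shared edge once.

    points:    list of (x, y) tuples
    triangles: list of (i, j, k) index triples into points
    """
    edges = set()
    for i, j, k in triangles:
        for a, b in ((i, j), (j, k), (k, i)):
            edges.add((min(a, b), max(a, b)))  # undirected edge key
    return sum(math.dist(points[a], points[b]) for a, b in edges)
```

For the convex kite (0, 0), (2, 1), (0, 3), (-2, 1), the triangulation using the short diagonal (length 3) scores exactly 1 less than the one using the long diagonal (length 4). A GA minimizing this fitness would therefore prefer the former.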
